Title: Learning ArcGIS Geodatabases

Author: Hussein Nasser

Publisher: Packt Publishing

Year: 2014

Aimed at: ArcGIS – beginner to advanced

Purchased from: www.packtpub.com

After using MapInfo for four years, my familiarity with ArcGIS had severely declined. The last time I used ArcGIS at work, shapefiles were predominant, but I knew geodatabases were the way forward. If they were going to play a big part in future employment, it made sense to get better acquainted with them and learn their inner secrets. This compact eBook seemed like a good place to start…

The first chapter is short and sweet and delivered at a beginner’s level, with step-by-step walkthroughs and screenshots to make sure you are following correctly. You are briefed on how to design, author, and edit a geodatabase. The design process involves designing the schema and specifying the field names, data types, and geometry types for the feature classes you wish to create. This logical design is then implemented as a physical schema within the file geodatabase. Finally, we add data to the geodatabase using the editing tools in ArcGIS and assign attribute data to each feature created. It is very simple stuff so far, and it provides a foundation for getting set up for the rest of the book.

The second chapter is a lot bulkier and builds upon the first. The initial task in Chapter 2 is to add new attributes to the feature classes, followed by altering field properties to suit requirements. You are introduced to subtypes and to domains, which are designed to help you reduce errors while creating features and to preserve data integrity. We are shown how to create a relationship class so we can link one feature in a spatial dataset to multiple records in a non-spatial table stored in the geodatabase as an object table. The chapter then takes a quick look at converting labels to an annotation class before ending with importing other datasets, such as shapefiles, CAD files, and coverage classes, and integrating them into the geodatabase as a single point of spatial reference for a project.
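To make the idea of a domain more concrete, here is a minimal sketch of how a coded-value domain might be created and assigned with arcpy. The review suggests the book covers this through the ArcGIS interface at this stage, so this is a scripted equivalent and not the author's method. The function name, the domain name, the codes, and the paths are all hypothetical. arcpy is imported inside the function because it is only available with an ArcGIS installation.

```python
def add_coded_domain(gdb, domain_name, codes, table, field):
    """Create a coded-value text domain in `gdb` and assign it to `field`.

    `codes` maps each stored code to its human-readable description.
    All names passed in are illustrative assumptions, not from the book.
    """
    import arcpy  # requires ArcGIS; imported lazily so the sketch loads without it

    # Create an empty coded-value domain of type TEXT in the geodatabase.
    arcpy.CreateDomain_management(
        gdb, domain_name, "Allowed values for " + field, "TEXT", "CODED"
    )

    # Register each permitted code with its description.
    for code, description in sorted(codes.items()):
        arcpy.AddCodedValueToDomain_management(gdb, domain_name, code, description)

    # Restrict the field so that editors can only pick values from the domain.
    arcpy.AssignDomainToField_management(table, field, domain_name)


# Hypothetical usage (paths and names are made up for illustration):
# add_coded_domain(r"C:\data\demo.gdb", "RoadSurface",
#                  {"ASP": "Asphalt", "GRV": "Gravel"},
#                  r"C:\data\demo.gdb\Roads", "SURFACE")
```

Once a domain like this is assigned, the editing tools offer only the listed values for that field, which is how domains cut down on data-entry errors.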

Chapter 3 looks at improving the rough and ready design of the geodatabase through an entity-relationship model, which is a logical diagram of the geodatabase that shows relationships in the data. It is used to reduce the cost of future maintenance. Most of the steps from the first two chapters are revisited as we are taken through creating a geodatabase based on the new entity-relationship model. The new model reduces the number of feature classes and improves efficiency through domains, subtypes, and relationship classes. Besides a new train of thought on modelling a geodatabase for simplicity, the only new technical feature presented in the chapter is enabling attachments in the feature class. It is important to test the design of the geodatabase in ArcGIS. Testing includes adding a feature, making use of the domains and subtypes, and trying out the attachment capabilities to make sure that your set-up works as it should.

Chapter 4 begins with the premise of optimizing geodatabases through tuning tools. Three key optimizing features are discussed: indexing, compressing, and compacting. The simplicity of the first three chapters dwindles and we enter a more intermediate realm. For indexing, the chapter discusses how to enable attribute indexing and spatial indexing in ArcGIS, along with how to use indexes effectively. Many of you may have heard about database indexing before, but the concepts of compressing and compacting a database may be foreign. These concepts are explored and their effective implementation explained.
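As a rough illustration of two of these tuning steps, the sketch below adds an attribute index to a field and then compacts the file geodatabase using arcpy. It is an assumption about how the steps could be scripted, not a reproduction of the book's walkthrough. The function name, field name, index name, and paths are hypothetical. Spatial indexing and compression are left out to keep the example short.

```python
def tune_feature_class(gdb, feature_class, field, index_name):
    """Add an attribute index on `field`, then compact the file geodatabase.

    All argument values are illustrative assumptions, not from the book.
    """
    import arcpy  # requires ArcGIS; imported lazily so the sketch loads without it

    # An attribute index speeds up queries that filter on this field.
    arcpy.AddIndex_management(feature_class, [field], index_name)

    # Compacting reorders the stored records and reclaims unused space
    # left behind by edits and deletions.
    arcpy.Compact_management(gdb)


# Hypothetical usage (paths and names are made up for illustration):
# tune_feature_class(r"C:\data\demo.gdb", r"C:\data\demo.gdb\Parcels",
#                    "OWNER", "idx_owner")
```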

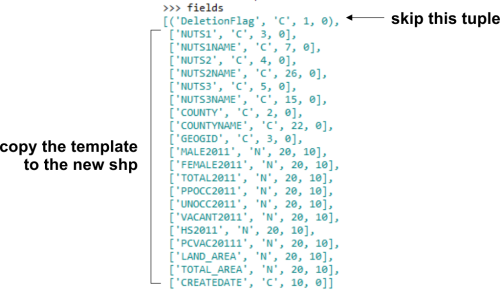

The first part of the fifth chapter steps away from the GUI of ArcGIS for Desktop and ArcCatalog and switches to Python programming for geodatabase tasks. The material is kept simple, but if you have absolutely no experience with programming or knowledge of its general concepts, this chapter may be beyond you. Even so, I would suggest performing the walkthroughs, as they might give you an appetite for future programming endeavours. We are shown how to programmatically create a file geodatabase, add fields, delete fields, and copy one feature class to another. All this is achieved through Python using the arcpy module. Although the chapter is aimed at highlighting the integration of programming with geodatabase creation and maintenance, the author also highlights how programming and automation improve efficiency.
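For a flavour of what these tasks look like in code, here is a minimal sketch using arcpy geoprocessing functions. It is not taken from the book. The function name, geodatabase name, feature class names, and field names are all hypothetical, and arcpy is imported inside the function because it only exists where ArcGIS is installed.

```python
import os


def build_demo_geodatabase(folder, gdb_name="demo.gdb"):
    """Create a file geodatabase, edit its fields, and copy a feature class.

    Returns the path of the copied feature class. Every name used here is
    an illustrative assumption, not something taken from the book.
    """
    import arcpy  # requires ArcGIS; imported lazily so the sketch loads without it

    # 1. Create the file geodatabase inside an existing folder.
    arcpy.CreateFileGDB_management(folder, gdb_name)
    gdb = os.path.join(folder, gdb_name)

    # 2. Create an empty point feature class to work with.
    arcpy.CreateFeatureclass_management(gdb, "Sites", "POINT")
    sites = os.path.join(gdb, "Sites")

    # 3. Add two fields, then delete the one that is not needed.
    arcpy.AddField_management(sites, "NAME", "TEXT", field_length=50)
    arcpy.AddField_management(sites, "TEMP", "SHORT")
    arcpy.DeleteField_management(sites, ["TEMP"])

    # 4. Copy the feature class to a new feature class in the same geodatabase.
    backup = os.path.join(gdb, "Sites_copy")
    arcpy.CopyFeatures_management(sites, backup)
    return backup


# Hypothetical usage (the folder must already exist):
# build_demo_geodatabase(r"C:\data")
```

Wrapping the steps in a function means the same sequence can be repeated for many folders or projects, which is the kind of efficiency gain from automation that the chapter points to.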

The second part of the chapter provides an alternative to programming for geoprocessing automation in the form of ModelBuilder. The walkthrough shows us how to use ModelBuilder to build a simple model that creates a file geodatabase and adds a feature class to it.

The final chapter steps up a level from file geodatabases to enterprise geodatabases.

“An enterprise geodatabase is a geodatabase that is built and configured on top of a powerful relational database management system. These geodatabases are designed for multiple users operating simultaneously over a network.”

The author walks us through installing Microsoft SQL Server Express and lists some of the benefits of employing an enterprise geodatabase system. Once the installation is complete, the next step is to connect to the database from a local and a remote machine. Once connections are established and tested, an enterprise geodatabase can be created and its functionality utilised. You can also migrate a file geodatabase to an enterprise geodatabase. The last part of Chapter 6 shows how privileges can be used to grant or deny users access to data that you have created. Security is an integral part of database management.

Overall Verdict: for such a compact eBook (158 pages) it packs in a decent amount of information and provides good value for money. It also introduces other learning ventures that come part and parcel with databases in general, and therefore with geodatabases. Many of the sections could be expanded, but the page count would then run into the many hundreds, which is beyond the scope of this book. The author, Hussein Nasser, does a great job of limiting the focus to the workings of geodatabases and not veering off on any unnecessary tangents. I would recommend using complementary material to bolster your knowledge of many of the aspects covered, such as entity-relationship diagrams, indexing (both spatial and non-spatial), Python programming, ModelBuilder, enterprise geodatabases, and anything else you found interesting that was only briefly touched on. Overall, the text is a foundation for easing your way into geodatabase life, especially if shapefiles are still the centre of your GIS data universe.